Testing new frameworks & languages: how to separate the wheat from the chaff

Captain's log, stardate d503.y37/AB

Technology evolves very fast. Every six months, a cool new framework, language or technology pops up in your Twitter timeline and RSS feeds. It can seem that you need to be riding the wave at all times to be a successful developer.

At MarsBased, we take the choice of technologies for our projects very seriously. We are a laser-focused, specialised company, so we can't afford to adopt new technologies just because they're trending on Reddit.

With this post, we want to share our internal process for deciding which technologies we add to our tech stack.

Before we even take the time to analyse a new technology in depth, it has to meet three conditions: maturity, community and available documentation.

Maturity is a matter of time. We consider a technology to be mature when it has been around for at least two years and its commit frequency has remained stable.

We also take into account whether the technology has reached a stable version. Some technologies stay on a 0.9x version indefinitely, which gives them an excuse to break their API whenever they want. Ultimately, this leads to hard-to-maintain applications once they reach production.

Sometimes we break this rule if a technology looks promising enough, and in certain situations we will even use it in production.

For example, when we started a big new Angular project last summer, we had to choose between AngularJS and the new Angular 2.0, which was still in the Release Candidate phase. We took the risk, and after more than a year of development, we feel that we made the right choice.
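To make the maturity filter concrete, here is a minimal Python sketch of the checks described above: age, commit frequency and a stable version. It is a hypothetical illustration, not a MarsBased tool: the `is_mature` function, its input format, the one-year history requirement and the rule that the last six months must average at least half of the earlier commit rate are all assumptions, since "stable" can be measured in many ways.

```python
from __future__ import annotations

from datetime import date

MIN_AGE_YEARS = 2         # "around for at least two years"
STABILITY_RATIO = 0.5     # assumption: recent activity >= 50% of earlier activity
MIN_HISTORY_MONTHS = 12   # assumption: a year of data to judge stability


def is_mature(first_release: date, today: date,
              monthly_commits: list[int], version: str) -> bool:
    """Hypothetical maturity filter: age, stable commit activity, 1.0+ version."""
    # 1. Age: at least two years since the first release.
    age_years = (today - first_release).days / 365.25
    if age_years < MIN_AGE_YEARS:
        return False

    # 2. Stable commit frequency: compare the last six months with the rest.
    if len(monthly_commits) < MIN_HISTORY_MONTHS:
        return False
    recent, earlier = monthly_commits[-6:], monthly_commits[:-6]
    recent_avg = sum(recent) / len(recent)
    earlier_avg = sum(earlier) / len(earlier)
    if recent_avg < STABILITY_RATIO * earlier_avg:
        return False

    # 3. Stable version: projects still on 0.x can break their API at will.
    major = int(version.lstrip("v").split(".")[0])
    return major >= 1


# Made-up numbers, for illustration only.
history = [30, 28, 35, 40, 33, 31, 29, 34, 30, 32, 28, 31]
print(is_mature(date(2014, 8, 1), date(2017, 6, 1), history, "1.2.4"))  # True
print(is_mature(date(2014, 8, 1), date(2017, 6, 1), history, "0.9.8"))  # False
```

Keeping the thresholds as named constants makes it easy to relax the filter when a technology looks promising enough to justify breaking the rule.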

Assessing the community around a technology involves several factors. Instead of relying only on the buzz a technology is gaining on social networks, we also like to consult other sources. The most reliable one is StackOverflow, as it gives you insights into how many people are using the technology in real projects, what kind of problems they are facing, how many experts are answering questions and the quality of those answers.

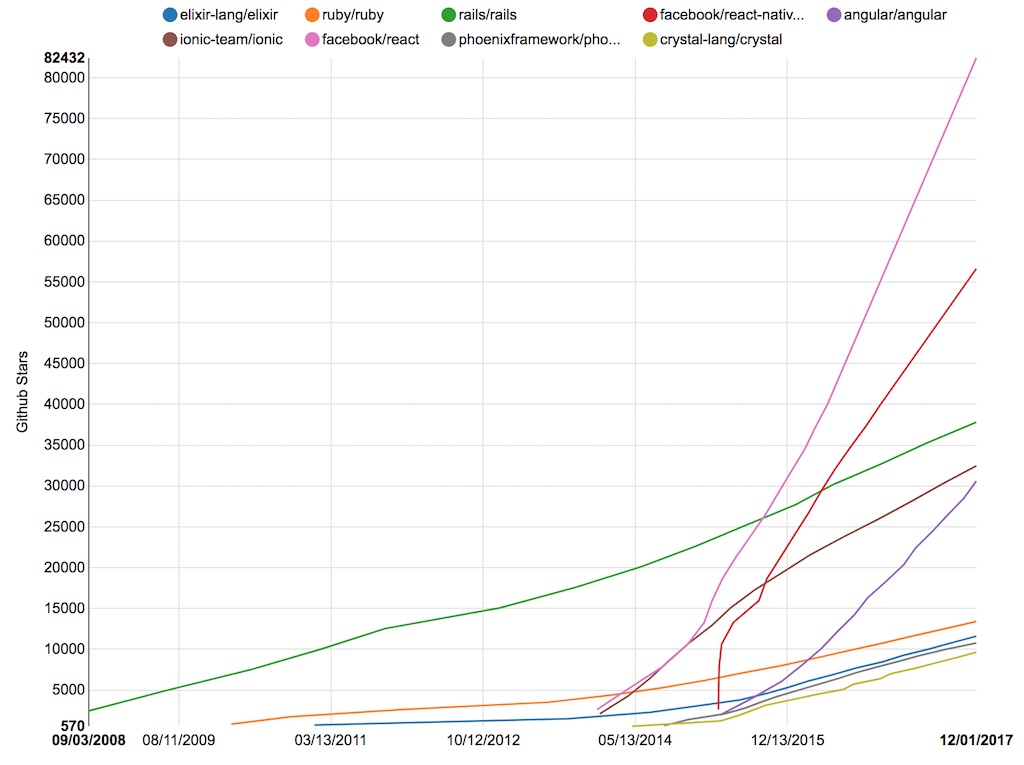

We also use GitHub stars to measure a technology's hype level. We don't want to use technologies that went through a huge hype phase and were then abandoned by a community that moved on; we want technologies that gather sustained attention over time. There are plenty of tools to get this information. We use Star History, which does exactly what its name says.
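The difference between a hype spike and sustained attention shows up clearly in the monthly gains behind a star-history curve. The Python sketch below is a hypothetical heuristic, not how Star History works internally: it takes cumulative star counts per month (made-up numbers here) and accepts a project only if its average gain over the last six months is still at least a quarter of its best month. Both thresholds are assumptions.

```python
from __future__ import annotations


def monthly_gains(cumulative_stars: list[int]) -> list[int]:
    """Turn a cumulative star curve into per-month gains."""
    return [b - a for a, b in zip(cumulative_stars, cumulative_stars[1:])]


def has_sustained_attention(cumulative_stars: list[int],
                            window: int = 6, min_ratio: float = 0.25) -> bool:
    """Hypothetical heuristic: recent growth must still be a fair share of the peak.

    A project that spiked and was then abandoned has a flat curve after the
    spike, so its recent monthly gains are tiny compared with its best month.
    """
    gains = monthly_gains(cumulative_stars)
    if len(gains) < window:
        return False  # too little history to tell hype from sustained interest
    peak = max(gains)
    if peak <= 0:
        return False
    recent_avg = sum(gains[-window:]) / window
    return recent_avg >= min_ratio * peak


# Made-up curves, for illustration only.
steady = [0, 100, 210, 330, 440, 560, 680, 800, 930, 1050]
hyped = [0, 50, 3000, 5200, 5400, 5450, 5470, 5480, 5485, 5490]
print(has_sustained_attention(steady))  # True
print(has_sustained_attention(hyped))   # False
```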

Finally, we also analyse the available technical documentation. Nobody should invest their time learning something that is not well documented. If you want companies and professionals to start adopting your technology, you need to care about them, and the best way to show that you care is to provide concise, comprehensive, easy-to-consult and well-written documentation.

We follow all these steps and take a conservative approach because, as a development consultancy, we build software for others and care a lot about the long-term sustainability and maintainability of their projects. We also want to make sure that our developers will work with something they feel comfortable with.

Once we have chosen a candidate technology, it's time to evaluate it. We'll explain the process using a real MarsBased example.

Here is how we are testing Elixir and the Phoenix Framework to find out whether they would be a good addition to our tech stack. This is one of the most complex scenarios you could face when testing a new technology: it is not just a matter of adding a gem or an external dependency to your project. We are approaching a new programming language with a different paradigm (functional vs object-oriented) and a full-stack web development framework built on top of it.

We chose Elixir and Phoenix because they are both mature technologies (Elixir appeared in 2011 and Phoenix in 2014) and they have an awesome community behind them. Part of the Ruby community, which we know very well, is already using Elixir for some of its projects, and they have done a very good job with the Elixir standard library documentation and the Phoenix Getting Started guides.

Our evaluation process starts with the framework documentation, even though logic says you should learn the programming language first. We take the reverse order because we want to evaluate how difficult it is for a new developer with no prior experience to join a Phoenix project and start developing small features and bug fixes.

If we can't follow the most basic guides without first learning the programming language in depth, that's a sign it will take our developers a lot more time and effort to master it.

Remember that we don't want to be experts in the technology yet. We are only checking if it can be a good addition to our stack.

Then we follow the Getting Started guide step by step. But instead of implementing the simple examples it proposes, we use a real project we previously developed with a technology we are experts in (in our case, Ruby on Rails).

At the end of the process, we aim to have the same project rebuilt with the new language / framework. This approach will reveal more issues than any regular guide could cover. Trust us!

So, in our Elixir & Phoenix adventure, the first step is to add a static page, which involves adding a new route, a new controller, a new view and a new template.

In our Rails project, we have static views too, so we start by creating the Terms & Conditions page.

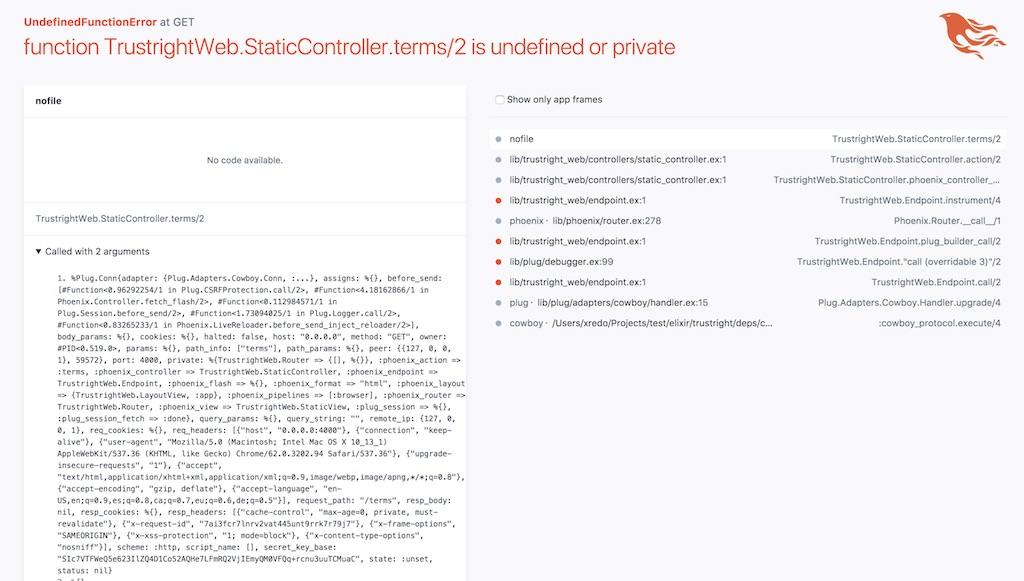

We create a StaticController module to keep all the static pages in one place, with a terms action to render the view, and we launch the development web server. It fails with an error, and this is when we start to see that this testing approach reveals more than the guide itself.

I'm sure an experienced Elixir developer would have spotted the problem in no time. But after googling around for a while, I still couldn't find a solution, which gave me the perfect opportunity to evaluate how the framework's tools help you track down errors.

On the one hand, we could check the Phoenix error page and learn how to navigate the stack trace. As with Rails, it shows the code around the failing line and provides all kinds of debugging information.

On the other hand, the error allowed us to try the Elixir debugger and see how mature it is compared to its Ruby equivalent.

Do you want to know what was causing the problem?

We found that, in Phoenix, a controller can't be named StaticController: its default route helper would be static_path, which clashes with the static_path helper used to link the stylesheets to the page. We renamed it StaticPagesController and voilà!
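The clash comes from how Phoenix names route helpers: by default it takes the controller name, drops the `Controller` suffix and converts the rest to snake_case, so `StaticController` yields a `static_path` helper. The Python sketch below only mimics that naming rule to show why one name collides and the other doesn't. It is not Phoenix code, the function names are hypothetical, and the reserved set contains just the `static_path` helper mentioned above.

```python
import re

# Helpers that already exist for assets (only the one relevant here).
RESERVED_HELPERS = {"static_path"}


def route_helper(controller: str) -> str:
    """Mimic the default naming: drop "Controller", snake_case, add "_path"."""
    base = controller.removesuffix("Controller")
    # Insert "_" before every capital letter except the first, then lowercase.
    snake = re.sub(r"(?<!^)(?=[A-Z])", "_", base).lower()
    return f"{snake}_path"


def check_controller_name(controller: str) -> str:
    helper = route_helper(controller)
    if helper in RESERVED_HELPERS:
        return f"{controller}: '{helper}' clashes with an existing helper"
    return f"{controller}: '{helper}' is free"


for name in ("StaticController", "StaticPagesController"):
    print(check_controller_name(name))
# StaticController: 'static_path' clashes with an existing helper
# StaticPagesController: 'static_pages_path' is free
```

The snake_case conversion is simplified (it would split acronyms letter by letter), which is enough to illustrate this particular collision.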

Once this problem was solved, we analysed the Terms & Conditions Rails template I was using as an example and found two additional requirements: I18n, and using content_for to modify the page layout's meta tags and body classes from a template.

As a European consultancy, most of our projects require internationalization, so it is very important for us that our framework of choice supports it well.

We discovered that Phoenix's preferred way to manage I18n is Gettext (something the guide doesn't mention). This matters because we are used to YAML-based translations, which differ greatly from the Gettext philosophy. In our case, our translation tools are compatible with both Gettext and YAML, but you should check yours.
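To show what "a different philosophy" means in practice, here is a minimal Python sketch of the two lookup styles, using plain dictionaries instead of real YAML or `.po` files. With YAML-based translations, templates reference an abstract key; with Gettext, the source-language string itself is the message ID, and a missing translation falls back to that string, as in standard Gettext. The catalogs and the `t_by_key` / `t_by_msgid` functions are hypothetical, and the "translation missing" placeholder is just this sketch's convention.

```python
# YAML style: templates use an abstract key, and every locale (English
# included) maps that key to text.
YAML_CATALOG = {
    "en": {"terms.title": "Terms & Conditions"},
    "es": {"terms.title": "Términos y condiciones"},
}

# Gettext style: templates contain the source string itself (the msgid);
# each locale's catalog maps msgid -> translated text (msgstr).
GETTEXT_CATALOG = {
    "es": {"Terms & Conditions": "Términos y condiciones"},
}


def t_by_key(key: str, locale: str) -> str:
    """Key-based lookup; a missing key is reported explicitly in this sketch."""
    return YAML_CATALOG.get(locale, {}).get(key, f"translation missing: {locale}.{key}")


def t_by_msgid(msgid: str, locale: str) -> str:
    """msgid-based lookup; falls back to the source string when untranslated."""
    return GETTEXT_CATALOG.get(locale, {}).get(msgid, msgid)


print(t_by_key("terms.title", "es"))           # Términos y condiciones
print(t_by_msgid("Terms & Conditions", "es"))  # Términos y condiciones
print(t_by_key("terms.title", "fr"))           # translation missing: fr.terms.title
print(t_by_msgid("Terms & Conditions", "fr"))  # Terms & Conditions
```

One practical consequence: with Gettext, changing the English wording of a string changes its message ID, so existing translations no longer match it directly. That is the kind of workflow difference worth checking against your own translation tools.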

As for the content_for helper, Phoenix doesn't provide anything similar because it renders the layout first and the template afterwards. We found several blog posts describing alternative solutions, as well as some GitHub issues in the official repository covering the subject.

The most widely accepted solution seems to be the render_existing helper. This gave us the chance to check the community supporting the framework and how it solves everyday requirements that come up while developing our web applications.
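The render_existing approach inverts content_for: instead of the template pushing content up into the layout, the layout asks the current view for an optional template and renders it only if it exists. The Python sketch below models that flow with hypothetical views stored in a dictionary. Its `render_existing` mirrors the idea (return nothing when the template is missing) rather than the exact signature of Phoenix's helper, and the `meta.` naming convention is an assumption for the example.

```python
from __future__ import annotations

from typing import Callable, Optional

Template = Callable[[dict], str]

# Hypothetical views: each maps template names to render functions.
VIEWS: dict[str, dict[str, Template]] = {
    "StaticPagesView": {
        "terms.html": lambda assigns: "<h1>Terms & Conditions</h1>",
        # Optional block the layout looks for (the content_for replacement).
        "meta.terms.html": lambda assigns: '<meta name="description" content="Our terms">',
    },
    "HomeView": {
        "index.html": lambda assigns: "<h1>Welcome</h1>",
    },
}


def render_existing(view: str, template: str, assigns: dict) -> Optional[str]:
    """Render the template if the view defines it, otherwise return None."""
    fn = VIEWS[view].get(template)
    return fn(assigns) if fn else None


def render_layout(view: str, template: str, assigns: dict) -> str:
    """The layout drives rendering and pulls in optional blocks by name."""
    meta = render_existing(view, "meta." + template, assigns) or ""
    body = VIEWS[view][template](assigns)
    return f"<head>{meta}</head><body>{body}</body>"


print(render_layout("StaticPagesView", "terms.html", {}))
# <head><meta name="description" content="Our terms"></head><body><h1>Terms & Conditions</h1></body>
print(render_layout("HomeView", "index.html", {}))
# <head></head><body><h1>Welcome</h1></body>
```

Pages that don't need custom meta tags simply don't define the optional template, so the layout stays generic.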

So, by completing the first page of the Phoenix guide using our real application as an example, we learned about the following:

A three-minute tutorial became a two-hour adventure that gave us a deeper understanding of the technology.

We still haven't finished our Elixir/Phoenix evaluation. It will take us a few more weeks before we can reach a conclusion. There are plenty of topics that we still need to cover:

Just to name a few.

Our aim is not to replicate the entire original application. We are going to rewrite as many parts as needed until we feel confident enough with the new technology, on the condition that every rewritten part must be a fully functional piece of software.

There's just one thing to keep in mind when using this methodology: be open to the paradigm change that a new technology brings.

In our example, Ruby is very different from Elixir. Don't try to copy the exact implementation used in your Rails application into the Phoenix one. It's not going to work and won't lead to any useful conclusions. Use the original code as a reference while embracing the new technology and its associated paradigm.

We don't only use this methodology to choose a new programming language or development framework. We also use it to choose new libraries for our projects, or to introduce external dependencies like search engines, caching systems, monitoring software or queue management systems.

In case you're curious, Elixir is not the only technology we are evaluating. We are also paying close attention to Crystal, a compiled language with a Ruby-inspired syntax and static typing; React Native, which could bring important performance improvements to our hybrid mobile apps; and new Node.js frameworks, to be more productive in projects where the client wants a JavaScript-based backend.
